Dream 7B

Introducing Dream 7B, the most powerful open diffusion large language model to date.

Team: Jiacheng Ye*, Zhihui Xie*, Lin Zheng*, Jiahui Gao*, Zirui Wu, Xin Jiang, Zhenguo Li, and Lingpeng Kong.

Affiliations: The University of Hong Kong, Huawei Noah’s Ark Lab

Introducing Dream 7B

In a joint effort with Huawei Noah’s Ark Lab, we release Dream 7B (Diffusion reasoning model), the most powerful open diffusion large language model to date.

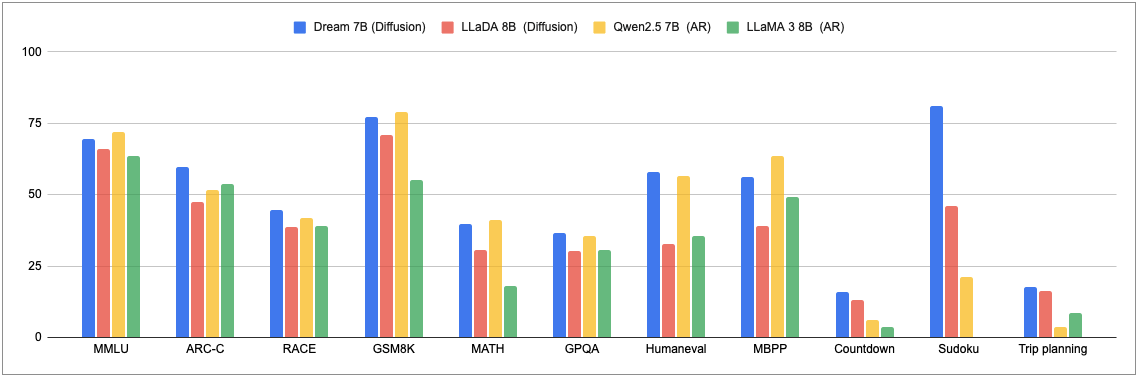

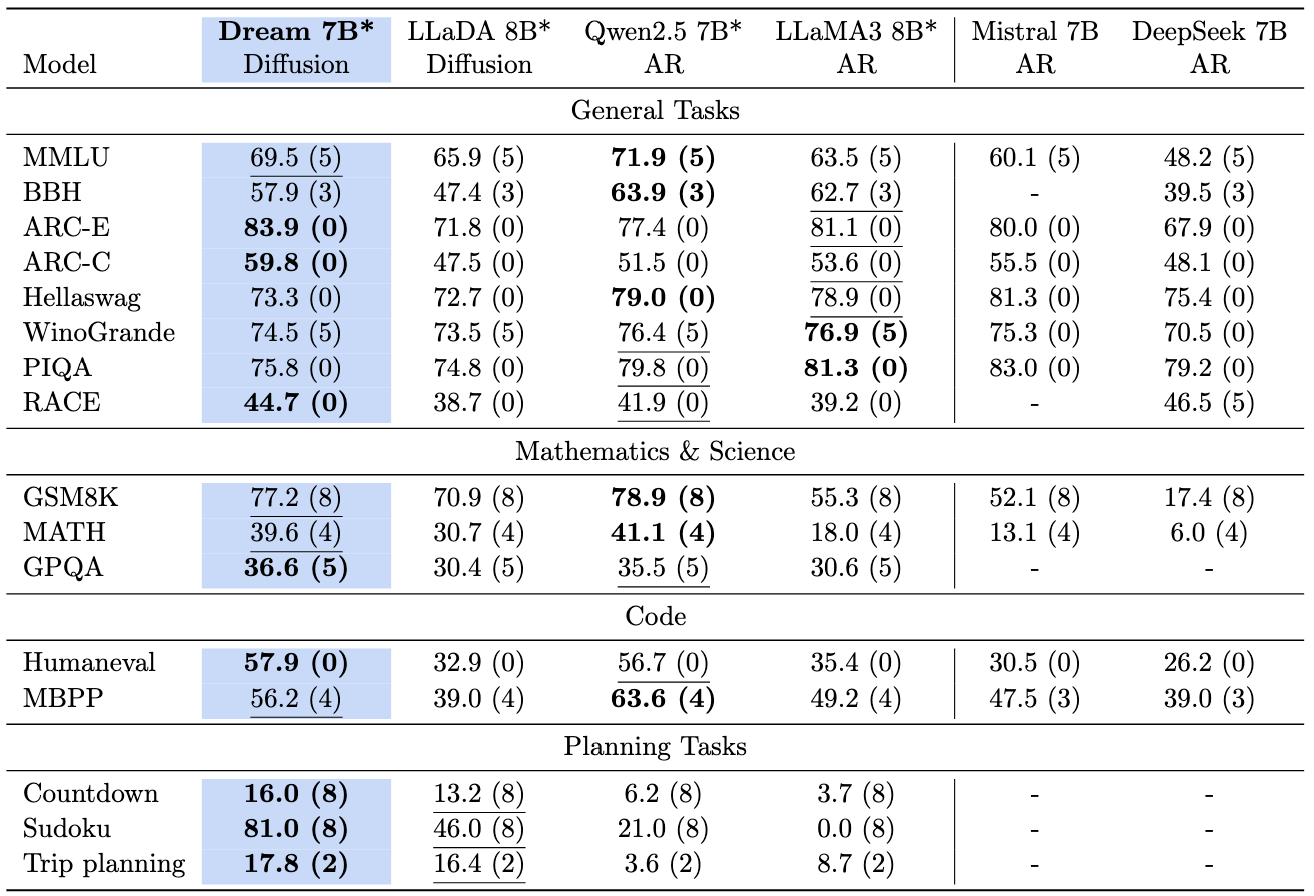

In short, Dream 7B:

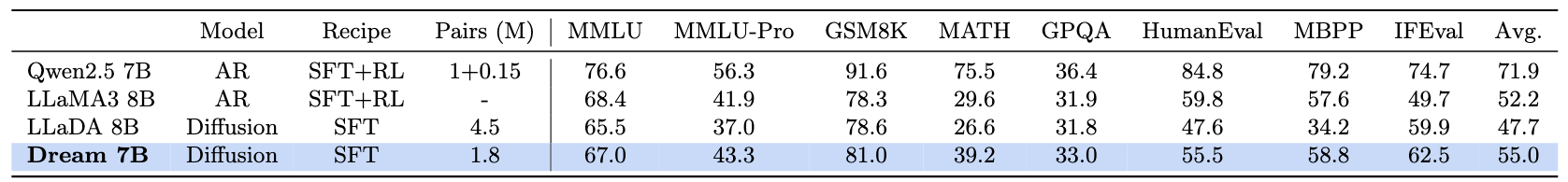

- consistently outperforms existing diffusion language models by a large margin;

- matches or exceeds top-tier autoregressive (AR) language models of similar size in general, math, and coding abilities;

- demonstrates strong planning ability and inference flexibility that naturally benefit from diffusion modeling.

We release the weights of the base and instruct models, along with the code, at:

- Base model: Dream-org/Dream-v0-Base-7B

- SFT model: Dream-org/Dream-v0-Instruct-7B

- Codebase: GitHub

Why Diffusion for Text Generation?

The rapid advancement of large language models (LLMs) has revolutionized artificial intelligence, transforming numerous applications across industries. Currently, autoregressive (AR) models dominate the landscape of text generation, with virtually all leading LLMs (e.g., GPT-4, DeepSeek, Claude) relying on the same sequential left-to-right generation. While these models have demonstrated remarkable capabilities, a fundamental question emerges: what architectural paradigms might define the next generation of LLMs? This question becomes increasingly relevant as we observe certain limitations in AR models at scale, including challenges with complex reasoning, long-term planning, and maintaining coherence across extended contexts.

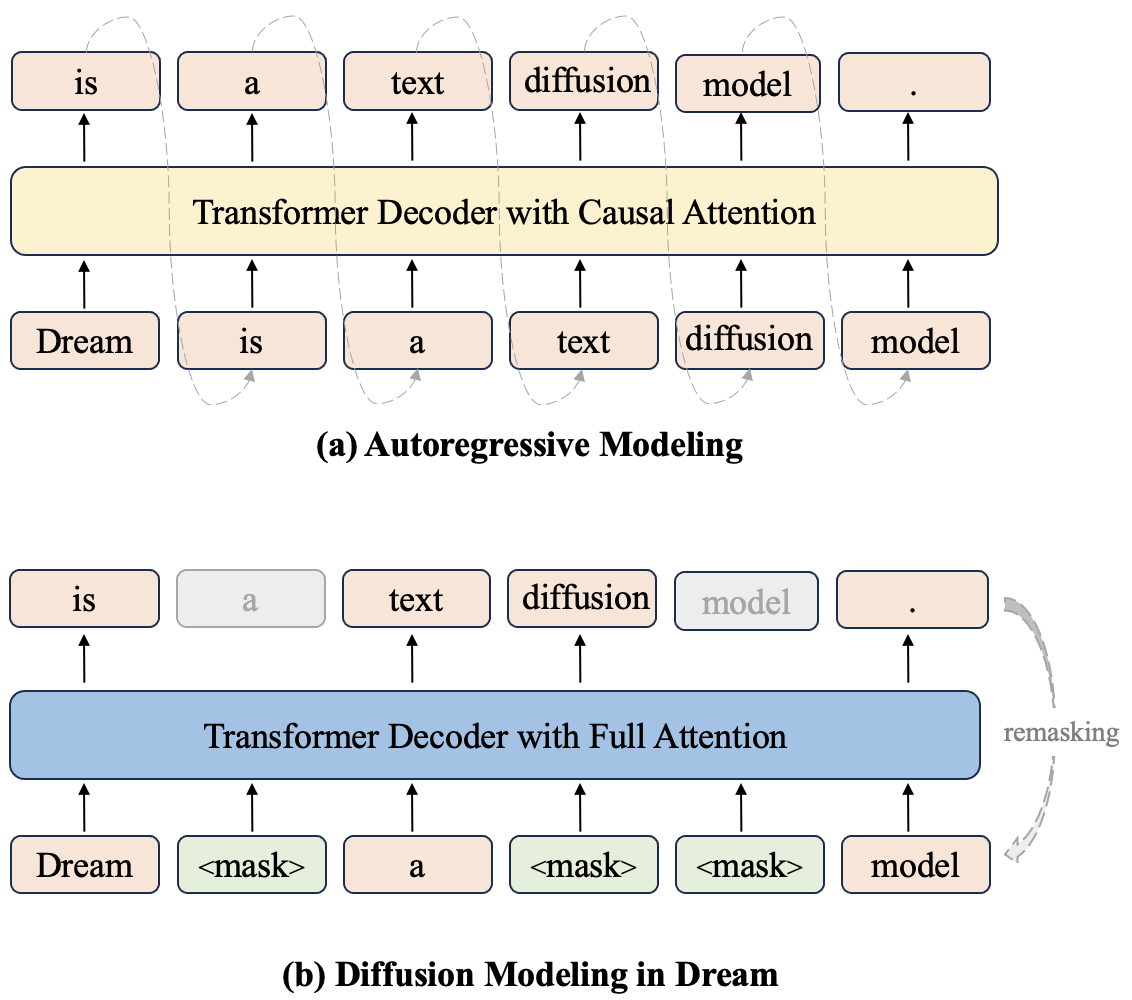

Discrete diffusion models (DMs) have gained attention as a promising alternative for sequence generation since their introduction to the text domain

- Bidirectional contextual modeling enables richer integration of information from both directions, substantially enhancing global coherence across the generated text.

- Flexible controllable generation capabilities arise naturally through the iterative refinement process.

- Potential for fundamental sampling acceleration through novel architectures and training objectives that enable efficient direct mapping from noise to data.

Recently, significant advancements have highlighted diffusion’s growing potential in language tasks. DiffuLLaMA

Training

Dream 7B builds upon our team’s prior effort

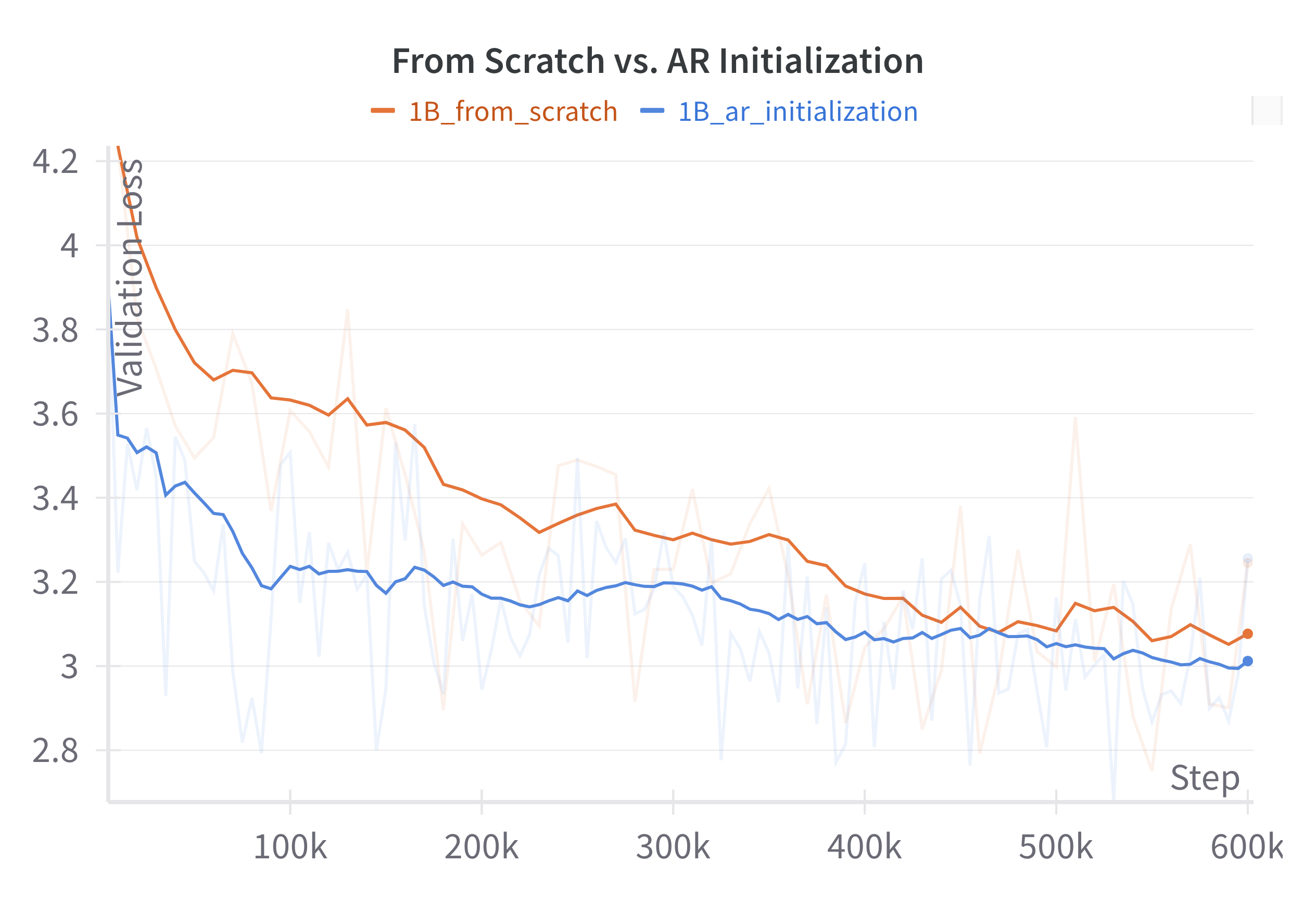

We extensively studied the design choices on the 1B level and identified many valuable components, such as weight initialization from AR models (e.g., Qwen2.5

AR initialization

Building on our previous work DiffuLLaMA

The final Dream 7B model is initialized with weights from Qwen2.5 7B. During training, we find the learning rate to be especially important. If it is set too high, it quickly washes away the left-to-right knowledge in the initial weights, so the initialization provides little help to diffusion training; if it is set too low, it hinders diffusion training. We carefully selected this parameter along with the other training parameters.

Thanks to the existing left-to-right knowledge in the AR model, the diffusion model’s any-order learning can be accelerated, significantly reducing the tokens and computation required for pretraining.

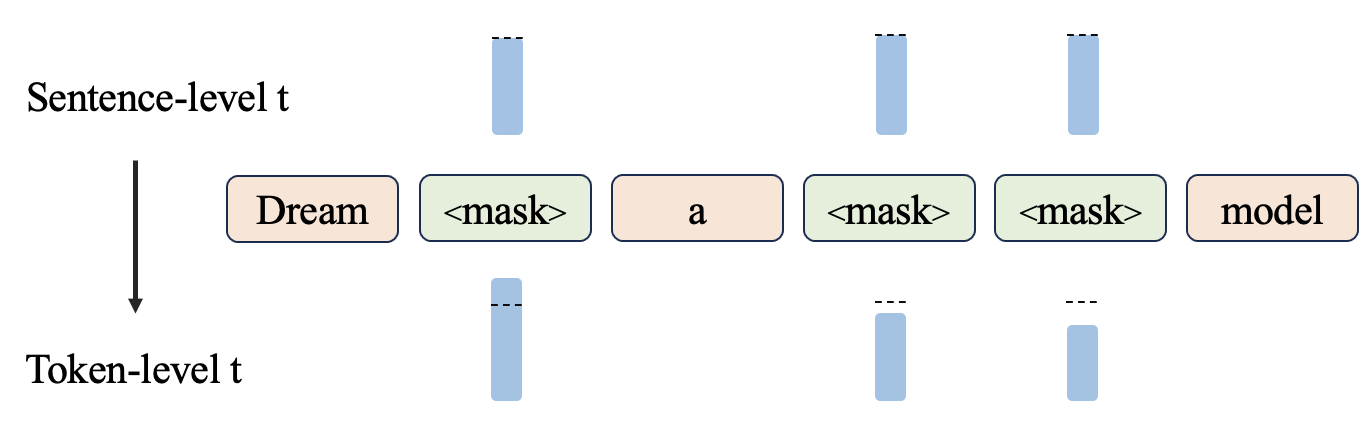

Context-adaptive Token-level Noise Rescheduling

The selection of each token in a sequence depends on its context, yet we observed that previous diffusion training approaches fail to adequately account for this aspect. Specifically, in conventional discrete diffusion training, a timestep t is sampled to determine a sentence-level noise level, after which the model performs denoising. However, since learning ultimately operates at the token level, the actual noise level for each token does not strictly align with t due to the application of discrete noise. This results in ineffective learning of tokens with varying levels of contextual information.

To address this, we introduce a context-adaptive token-level noise rescheduling mechanism that dynamically reassigns the noise level for each token based on the corrupted context after noise injection. This mechanism provides more fine-grained and precise guidance for the learning process of individual tokens.
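To make the mismatch between the sentence-level t and the per-token situation concrete, here is a minimal toy sketch in Python. It is not the training code: the function names (`corrupt`, `token_noise_levels`), the geometric `decay` weighting by distance, and the normalization are all assumptions chosen for illustration. It only shows the general idea of deriving a per-token noise level from the context that survives corruption.

```python
import random

MASK = "<mask>"

def corrupt(tokens, t, rng):
    """Sentence-level noising: mask each token independently with probability t."""
    return [MASK if rng.random() < t else tok for tok in tokens]

def token_noise_levels(noisy, decay=0.5):
    """Hypothetical per-token noise level in [0, 1] for each masked position.

    Every other position contributes decay**distance of potential context.
    The level is 1 minus the share of that context which is still clean.
    Clean positions get None because they carry no denoising loss here.
    """
    n = len(noisy)
    levels = []
    for i, tok in enumerate(noisy):
        if tok != MASK:
            levels.append(None)
            continue
        clean = sum(decay ** abs(i - j)
                    for j in range(n) if j != i and noisy[j] != MASK)
        total = sum(decay ** abs(i - j) for j in range(n) if j != i)
        levels.append(1.0 - clean / total if total > 0 else 1.0)
    return levels

# One sampled t for the whole sentence, but different levels per masked token.
rng = random.Random(0)
tokens = "the cat sat on the mat".split()
noisy = corrupt(tokens, t=0.5, rng=rng)
print(noisy)
print(token_noise_levels(noisy))
```

In this toy version, a masked token surrounded by clean neighbours gets a low level and a masked token inside a long masked span gets a high one, even though both were produced by the same t.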

Planning Ability

In our previous work

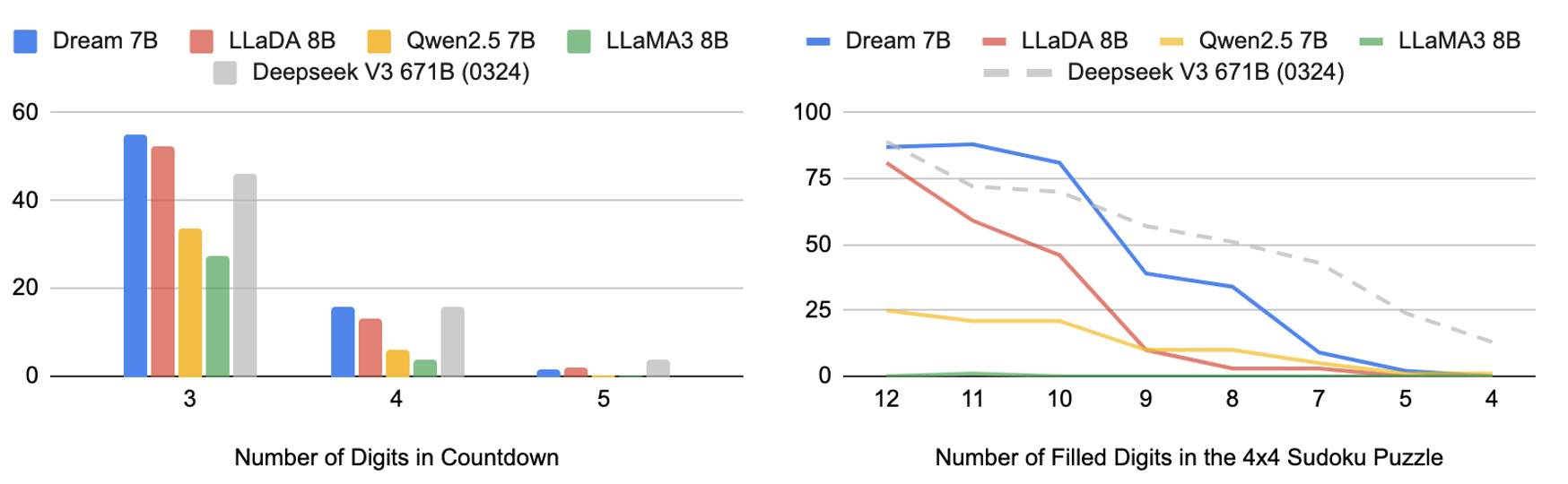

We evaluated Dream on the Countdown and Sudoku tasks from

It is evident that Dream outperforms other similar-sized baseline models. Remarkably, both diffusion models significantly surpass the two AR models and, at times, even the latest DeepSeek V3, despite the latter having orders of magnitude more parameters. The intuition is that diffusion language models are more effective at solving problems with multiple constraints or at achieving specific objectives.

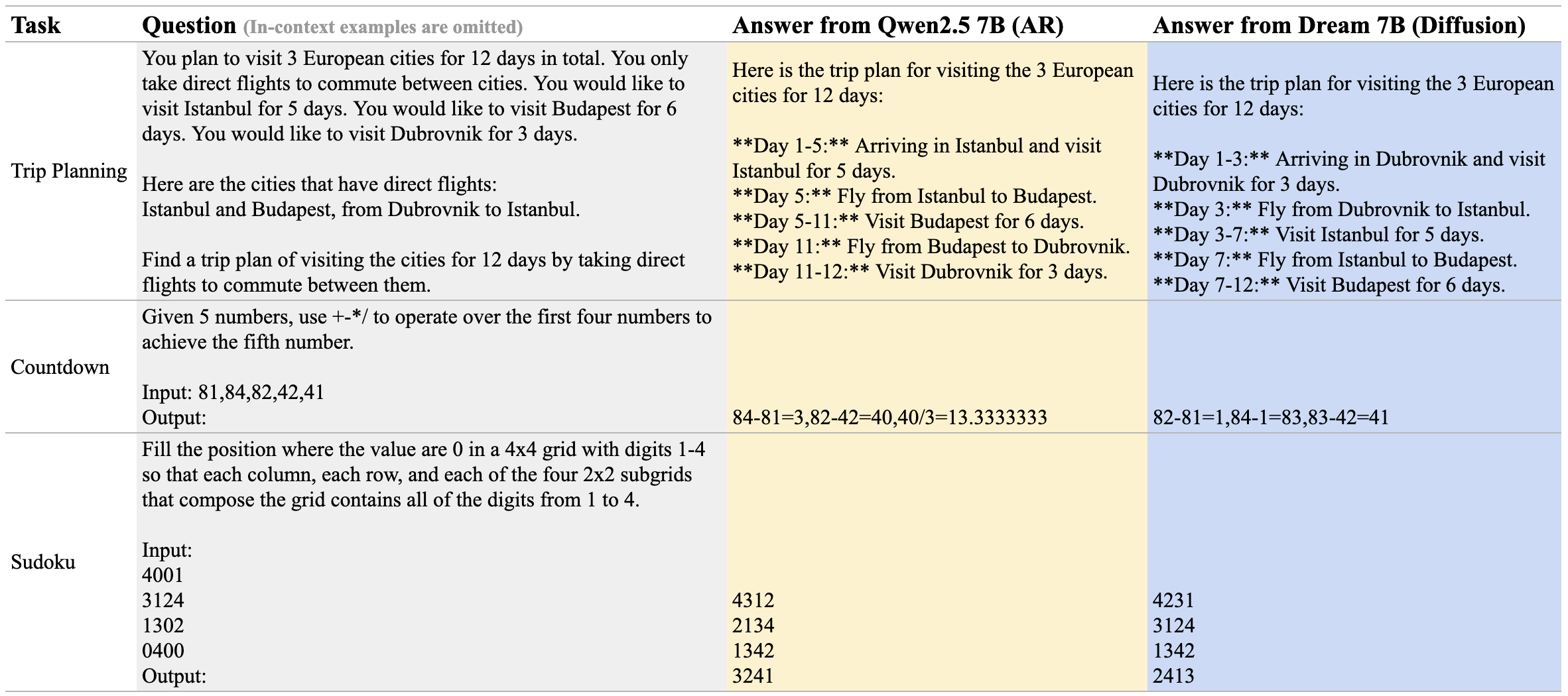

Here are some examples of Qwen 2.5 7B and Dream 7B in three planning tasks:

Inference Flexibility

Diffusion models offer more flexible inference compared to AR models in the following two main aspects.

Arbitrary Order

Diffusion models are not constrained to sequential (e.g., left-to-right) generation. Outputs can be synthesized in arbitrary orders, which accommodates more diverse user queries.

- Completion

- Infilling

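One way to picture these query types is as different layouts of known and masked positions in the same sequence. The following is a minimal sketch under that assumption; the helper names (`completion_input`, `infilling_input`, `fill`) and the hand-written "predictions" are hypothetical stand-ins, not part of the released code or of any model output.

```python
MASK = "<mask>"

def completion_input(prefix, n_new):
    """Completion: a known prefix followed by n_new masked slots."""
    return list(prefix) + [MASK] * n_new

def infilling_input(prefix, suffix, n_middle):
    """Infilling: masked slots sandwiched between a known prefix and suffix."""
    return list(prefix) + [MASK] * n_middle + list(suffix)

def fill(template, predictions):
    """Write predicted tokens into masked slots, in whatever order they arrive."""
    out = list(template)
    for pos, tok in predictions.items():
        if out[pos] != MASK:
            raise ValueError(f"position {pos} is not masked")
        out[pos] = tok
    return out

template = infilling_input(["The", "cat"], ["the", "mat"], n_middle=2)
# Stand-in predictions, filled right-to-left to stress that order is free.
print(fill(template, {3: "on", 2: "sat"}))
```

Because the suffix is visible while the middle is being filled, the same bidirectional mechanism covers both completion and infilling.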

Controlling the decoding behavior

Different queries may call for different orders in which the response is generated. One can adjust the decoding hyperparameters to control this behavior, shifting it from nearly left-to-right generation, like an AR model, toward more random-order generation.

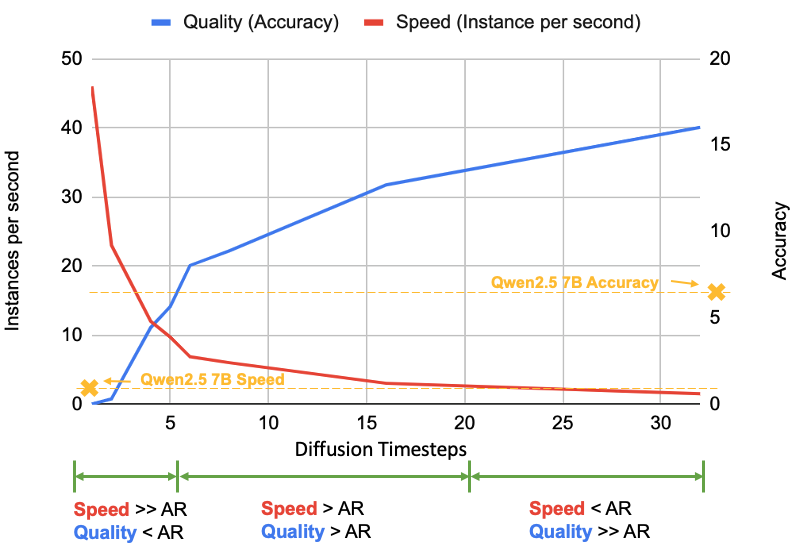
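The effect of such a hyperparameter can be illustrated with a toy sampler over positions. This is a sketch under stated assumptions, not the actual decoding algorithm: the name `order_temp` is hypothetical, and position is used as a crude stand-in for the model-driven signal that would decide which token to reveal next in a real system.

```python
import math
import random

def decoding_order(seq_len, order_temp, rng):
    """Return the order in which positions are unmasked, one per step.

    order_temp == 0 gives strict left-to-right order. Larger values flatten
    the preference for early positions, approaching a uniformly random order.
    """
    remaining = list(range(seq_len))
    order = []
    while remaining:
        if order_temp == 0:
            pick = remaining[0]
        else:
            base = remaining[0]
            # Softmax-style weights that favour the leftmost masked slots.
            weights = [math.exp(-(p - base) / order_temp) for p in remaining]
            pick = rng.choices(remaining, weights=weights, k=1)[0]
        remaining.remove(pick)
        order.append(pick)
    return order

rng = random.Random(0)
print(decoding_order(8, 0, rng))      # AR-like order
print(decoding_order(8, 1.0, rng))    # mostly left-to-right
print(decoding_order(8, 100.0, rng))  # close to random order
```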

Quality-speed Trade-off

In the cases above, one token is generated per step. However, the number of tokens generated per step (controlled by the number of diffusion steps) can be adjusted dynamically, providing a tunable trade-off between speed and quality: fewer steps yield faster but coarser results, while more steps produce higher-quality outputs at greater computational cost. This introduces an additional dimension for inference-time scaling.
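The arithmetic behind this trade-off is simple: for a fixed output length, fewer steps means more tokens must be committed per step. A minimal sketch follows, assuming an even split of tokens across steps; the function name `tokens_per_step` and the even schedule are illustrative assumptions, and a real sampler may use a different schedule.

```python
def tokens_per_step(seq_len, steps):
    """Split seq_len masked tokens as evenly as possible over the given steps."""
    if not 1 <= steps <= seq_len:
        raise ValueError("steps must be between 1 and seq_len")
    base, extra = divmod(seq_len, steps)
    # The first `extra` steps reveal one additional token each.
    return [base + 1 if i < extra else base for i in range(steps)]

for steps in (8, 4, 3, 1):
    print(steps, tokens_per_step(8, steps))
```

With 8 steps for 8 tokens, every step reveals a single token, as in the cases above; with 1 step, all 8 tokens are produced at once.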

Supervised Fine-tuning

As a preliminary step in post-training diffusion language models, we perform supervised fine-tuning to align Dream with user instructions. Specifically, we curate a dataset with 1.8M pairs from Tulu 3

Conclusion

We introduce Dream, a new family of efficient, scalable, and flexible diffusion language models with carefully selected training recipes. It performs comparably to the best autoregressive models of similar size in general, mathematical, and coding tasks while especially showcasing advanced planning abilities and flexible inference capabilities.

Citation

@article{ye2025dream,

title={Dream 7B: Diffusion Large Language Models},

author={Ye, Jiacheng and Xie, Zhihui and Zheng, Lin and Gao, Jiahui and Wu, Zirui and Jiang, Xin and Li, Zhenguo and Kong, Lingpeng},

journal={arXiv preprint arXiv:2508.15487},

year={2025}

}