Polaris V2

Introducing Polaris-V2-Qwen3-4B and Polaris-V2-Qwen3-235B-A22B, the most powerful open-recipe reasoning models to date.

POLARIS V2: Pushing the Limits of Open Reasoning Models with Limited Resources

Team: Chenxin An *, Xiaonan Li, Zhaoye Fei, Jiacai Liu, Zhenyu He, Zhihui Xie, Jiazheng Zhang, Lei Li, Shansan Gong, Ming Zhong, Jingjing Xu *, Xipeng Qiu, Mingxuan Wang, Lingpeng Kong

*: Project Leads

Affiliations: The University of Hong Kong, Bytedance Seed, Fudan University

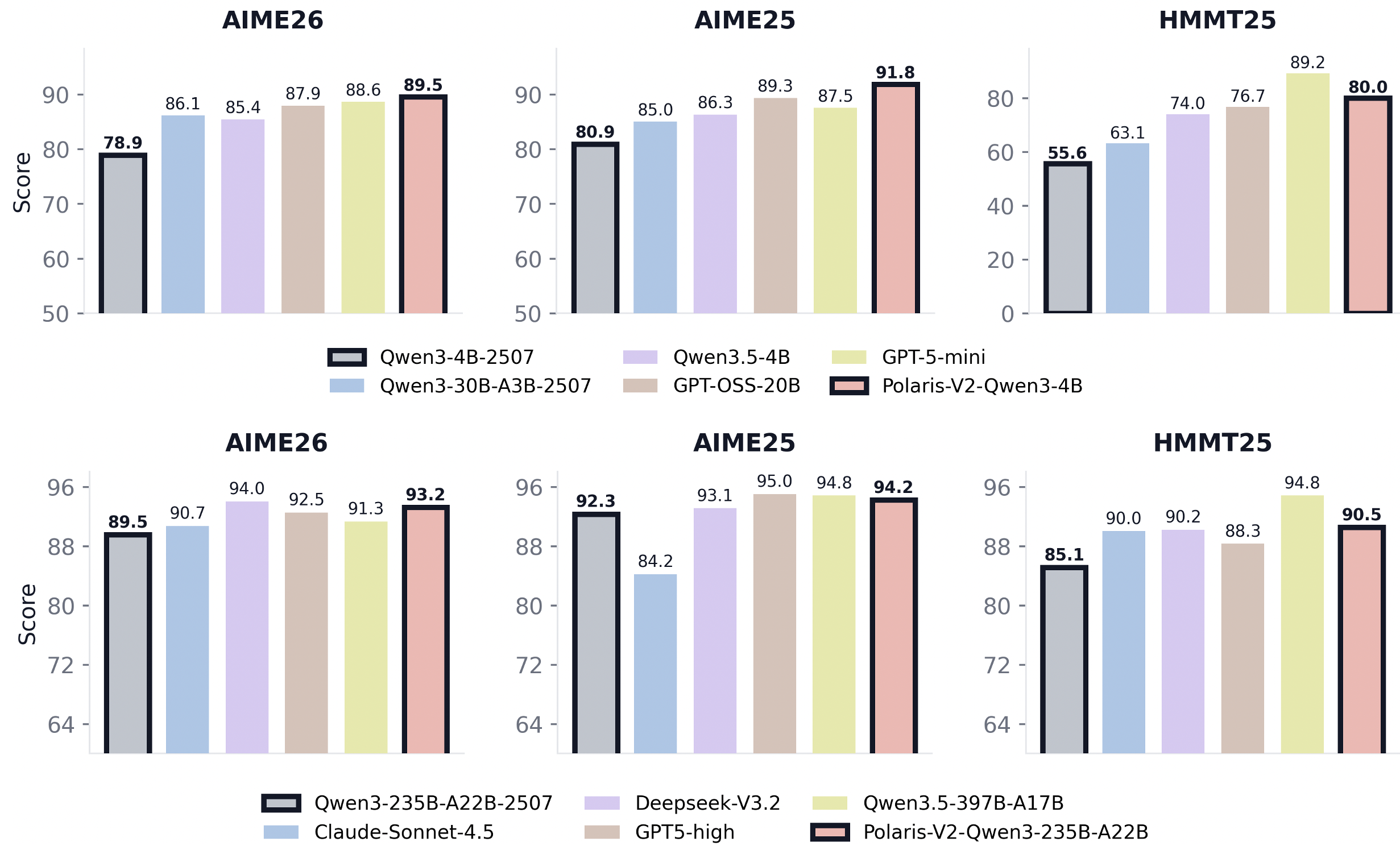

We are excited to introduce our latest breakthroughs, Polaris-V2-Qwen3-4B and Polaris-V2-Qwen3-235B-A22B, pushing open reasoning to a new level with limited resources. Polaris-V2-Qwen3-4B is trained from Qwen3-4B-2507, while Polaris-V2-Qwen3-235B-A22B is finetuned from Qwen3-235B-A22B-2507. Our 4B model achieves an impressive 89.5 Pass@1 on AIME26, and our 235B model reaches 93.2 Pass@1 on AIME26, outperforming leading models such as Claude-Sonnet-4.5, GPT-5-high, and Qwen3.5-397B-A17B. In Polaris V2, we propose CORE, an effective RL scaling algorithm and data-filtering principle that gives our 4B model a surprising boost. We further show that an expert small model can improve state-of-the-art large 235B models through weak-to-strong generalization.

To accelerate progress in open reasoning models, we are open sourcing our dataset, code, and training details for the research community.

👨💻 Github | 🤗 HF Model | 🤗 HF Dataset | 📖 paper | 🔎 Evaluation results

POLARIS V2

As RL scaling techniques have matured, state-of-the-art open-source reasoning models have already undergone careful post-training, making it increasingly difficult for the research community to obtain further gains by simply continuing to scale RL on top of them. Inspired by the Specialist Distillation approach introduced in DeepSeek-V3.2, we adopt the following paradigm in Polaris V2: we first explore novel RL scaling algorithms and data construction strategies on a small 4B model at a low cost, pushing its reasoning capabilities to a new frontier, and then transfer the reasoning patterns learned by the small model to a 235B model via distillation.

In the following, we describe the training strategies for the 4B and 235B models in detail. All training hyperparameters, training setup, and inference scripts are available in our open-source codebase.

1. 4B Model Training

Process-Level Reward Estimation without an Explicit PRM

A key limitation of GRPO-style training with an outcome reward model (ORM) is that supervision is provided only at the trajectory level: the model receives a binary signal based solely on the correctness of the final answer. Prior work has repeatedly shown that process-level supervision can substantially improve reinforcement learning from feedback by stabilizing optimization and reducing reward sparsity. However, training a dedicated process reward model (PRM) is practically difficult.

In Polaris-V2, we propose Consensus Offline Reward Estimation (CORE), a simple method to estimate process-level reward without an explicit PRM. The core intuition is that correct trajectories for the same problem often pass through similar intermediate results, such as key lemmas or derived equations, which together form a larger consensus set. Built from verified correct trajectories, this set can then be used to estimate the process quality of a new trajectory based on how much its intermediate reasoning aligns with it.

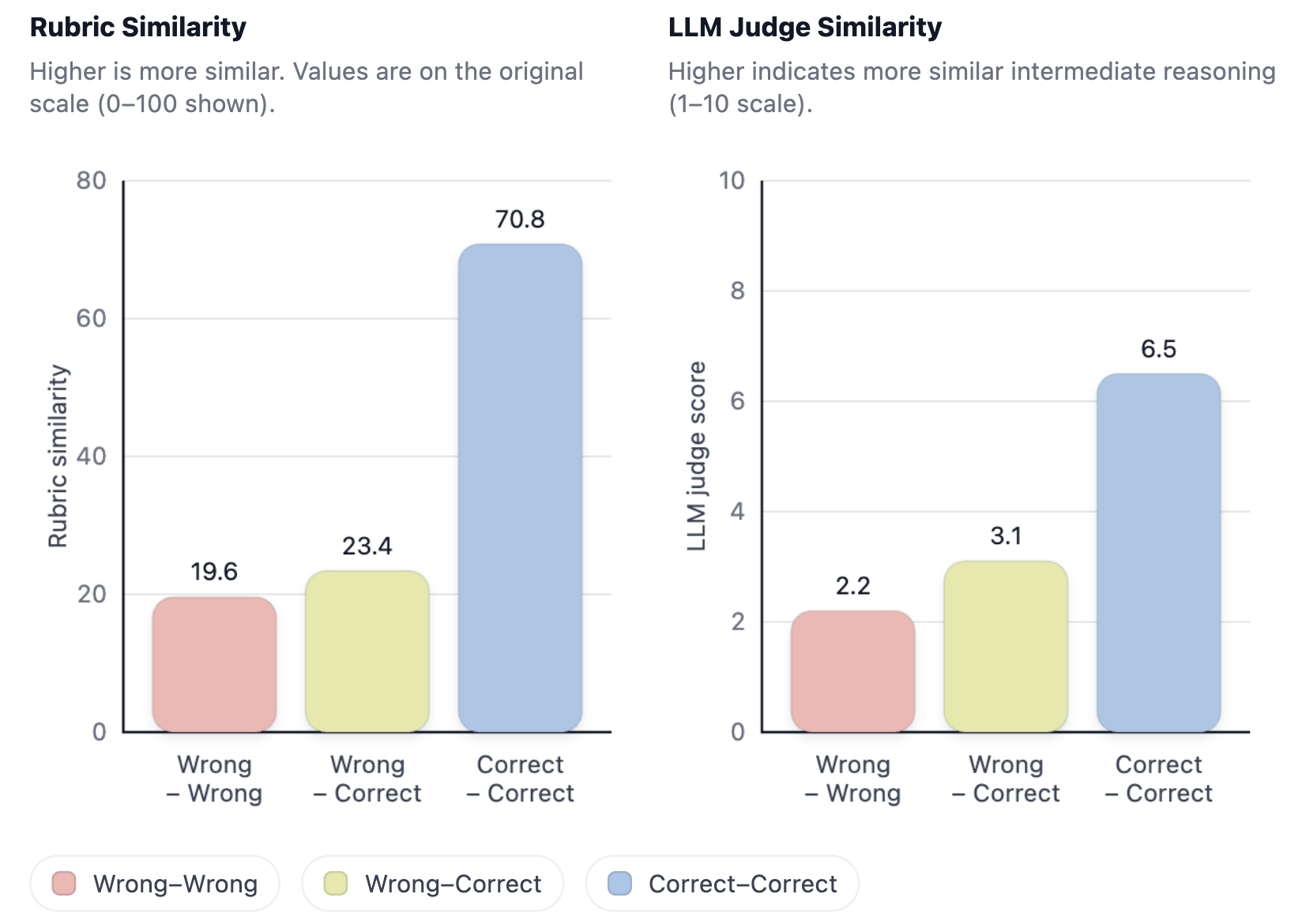

Correct trajectories share intermediate states

In this section, we investigate whether correct trajectories share similar solution steps. If such similarity exists, it provides a natural way to distinguish positive from negative samples, since incorrect trajectories are less likely to align with these shared intermediate states. This suggests that the degree of overlap with shared steps can be used to estimate whether a trajectory contains sufficiently correct reasoning.

To validate this hypothesis, we conduct probing experiments on 100 problems from BeyondAIME. For each problem, we sample 32 trajectories and extract intermediate reasoning results from each trajectory. We then quantify pairwise trajectory similarity using two complementary measures:

- Rubric similarity (set-overlap): represent each trajectory by a set of extracted intermediate results, and compute Jaccard similarity: \(\mathrm{Sim}(a,b)=\frac{|S_a \cap S_b|}{|S_a \cup S_b|}\)

- LLM-judge similarity: ask a strong judge model (GPT-OSS-20B) to score the similarity of two trajectories’ intermediate results on a 1–10 scale.
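The set-overlap measure can be illustrated with a minimal sketch. It assumes intermediate results have already been extracted as strings and are matched by exact equality; the post does not specify the matching rule, so that part is an assumption, as are the function name and the toy data.

```python
def jaccard_similarity(results_a, results_b):
    """Jaccard similarity between two collections of intermediate results."""
    set_a, set_b = set(results_a), set(results_b)
    union = set_a | set_b
    if not union:
        # Assumption: two trajectories with no extracted results get similarity 0.
        return 0.0
    return len(set_a & set_b) / len(union)


# Toy trajectories (hypothetical): two results are shared, four are distinct overall.
traj_a = ["x = 3", "y = 7", "x + y = 10"]
traj_b = ["x = 3", "y = 7", "x * y = 21"]
print(jaccard_similarity(traj_a, traj_b))  # 2 / 4 = 0.5
```

In practice, equivalent results written differently (for example `x=3` and `x = 3`) would need normalization before comparison, which is one reason a judge model is a useful complement.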

Across both metrics, we observe a consistent separation.

The large gap between correct–correct and the other two categories supports the interpretation that there exist core intermediate states repeatedly visited by successful solutions, while unsuccessful solutions are more diverse and less aligned.

Parseable reasoning processes

Now we know that intermediate steps from correct trajectories are often shared and highly informative. But a practical challenge is that free-form chain-of-thought is not directly parseable. To make intermediate states extractable, we use a prompting strategy during generation: the model is instructed to enclose salient intermediate results in \underline{}. Concretely, we use the following instruction:

“Since your reasoning process will be evaluated step-by-step via intermediate results, you must enclose important intermediate results leading to the final answer within \underline{}.”

In initial experiments, Qwen3-4B-2507 follows this formatting requirement unreliably, with fewer than half of generations containing the required number of underlined results. We therefore bootstrap a small supervised formatting dataset by sampling 20K problems from AReaL-boba-106K and prompting GPT-OSS-20B on them with the same instruction. We then fine-tune the 4B model on this dataset with a maximum length of 16K to improve adherence to the markup. We also release the fine-tuned checkpoint. After this step, intermediate results can be extracted with a simple rule-based parser that collects text spans inside \underline{}, enabling scalable downstream similarity and reward computations.
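A rule-based parser of this kind might look like the sketch below. It is not the released implementation: the function name is hypothetical, and the choice to track brace depth (so that nested LaTeX such as `\frac{1}{2}` survives) and to drop a span whose braces never close are assumptions.

```python
def extract_underlined(text):
    # Collect the contents of every \underline{...} span, honoring nested braces.
    marker = "\\underline{"
    spans = []
    pos = 0
    while True:
        start = text.find(marker, pos)
        if start == -1:
            break
        i = start + len(marker)
        depth = 1  # we are inside the opening brace of \underline{
        while i < len(text) and depth > 0:
            if text[i] == "{":
                depth += 1
            elif text[i] == "}":
                depth -= 1
            i += 1
        if depth != 0:
            break  # assumption: an unclosed span (truncated output) is ignored
        spans.append(text[start + len(marker): i - 1].strip())
        pos = i
    return spans


sample = r"First, \underline{x = \frac{1}{2}} and hence \underline{ y = 3 }."
print(extract_underlined(sample))
```

A plain regular expression such as `\\underline\{(.*?)\}` would cut the first span at the inner closing brace, which is why the sketch counts braces instead.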

Process reward shaping

Motivated by our earlier analysis, we shape rewards using agreement with a per-problem repository of intermediate results from correct trajectories. As we accumulate many correct trajectories, the intermediate results that recur most frequently are likely to correspond to necessary subgoals, such as key lemmas, derived equations, or invariant checks. Therefore, even when a trajectory’s final answer is incorrect, producing intermediate results that match this repository should receive partial credit, providing a dense proxy for process supervision.

RL data construction. We start from AReaL-boba-106K and generate 8 trajectories per sample by prompting Qwen3-4B-2507 to generate the final answer together with intermediate results. We discard problems that are either too easy or unsuitable for our automatic evaluation, yielding 14K training problems.

For each remaining problem \(i\), we extract the underlined reasoning steps from its verified correct trajectories to form an offline consensus set \(s_i\). We write \(\mathcal{S}\) for the collection of these per-problem sets.

Online reward. During RL training, for any trajectory whose final answer is correct, we add its extracted intermediate results into \(\mathcal{S}\), continually increasing its size for better estimation. For trajectories with an incorrect final answer, we compute a process reward based on overlap with the offline set:

\[r_i^{\mathrm{proc}}=\min\left(0.5,\frac{|P(\tau)\cap s_i|}{|s_i|}\right)\]

where \(P(\tau)\) denotes the set of extracted intermediate results from trajectory \(\tau\) for problem \(i\). The 0.5 cap ensures that incorrect solutions cannot obtain a reward comparable to fully correct trajectories, while still receiving a meaningful learning signal when they reach correct intermediate subgoals.
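The reward rule can be sketched as follows. The function names are hypothetical, and three details are assumptions rather than statements from the post: a correct trajectory receives reward 1.0, results are matched by exact string equality, and an empty consensus set yields zero process reward.

```python
def process_reward(extracted, consensus, cap=0.5):
    """min(cap, |P(tau) ∩ s_i| / |s_i|) for a trajectory with an incorrect final answer."""
    consensus = set(consensus)
    if not consensus:
        return 0.0  # assumption: nothing to agree with yet
    overlap = len(set(extracted) & consensus)
    return min(cap, overlap / len(consensus))


def score_and_update(is_correct, extracted, consensus):
    """Return the reward for one trajectory and grow the consensus set online."""
    if is_correct:
        consensus.update(extracted)  # correct trajectories enlarge the set
        return 1.0                   # assumption: outcome reward of 1 when correct
    return process_reward(extracted, consensus)


s_i = {"a", "b", "c", "d"}
print(process_reward(["a", "z"], s_i))       # 1 of 4 matched: 0.25
print(process_reward(["a", "b", "c"], s_i))  # 0.75 is capped at 0.5
```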

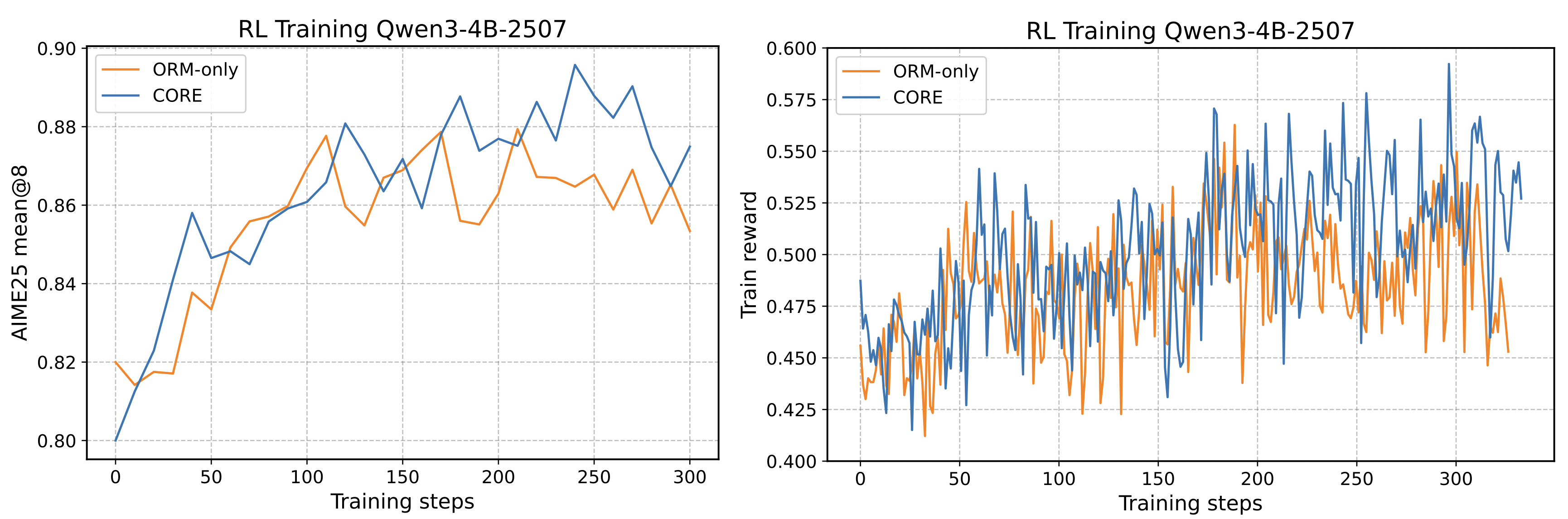

Figure 2 shows that process reward shaping leads to continued gains in both optimization signal and validation performance.

Data Filtering Principles in CORE

Why filtering by trajectory difference matters

After approximately 300 RL steps, the training reward and validation score plateau, and the policy stops improving. We try several exploration strategies, such as increasing the sampling temperature, adding entropy loss, and filtering out easy samples, but none of them yields obvious gains. We then explore whether process-level reward information can be used to design curriculum learning strategies that further scale RL training.

We measure how difficult a wrong trajectory is to correct by its degree of overlap with the correct one: the lower the overlap, the harder the correction, because overlap reflects how much of the reasoning process is already aligned with the target solution. If the incorrect trajectory shares many intermediate steps with the correct trajectory, then only a small part of the process needs to be revised, making the trajectory easier to correct. In contrast, if there is little overlap, the trajectory likely deviates from the correct reasoning path in a more fundamental way.

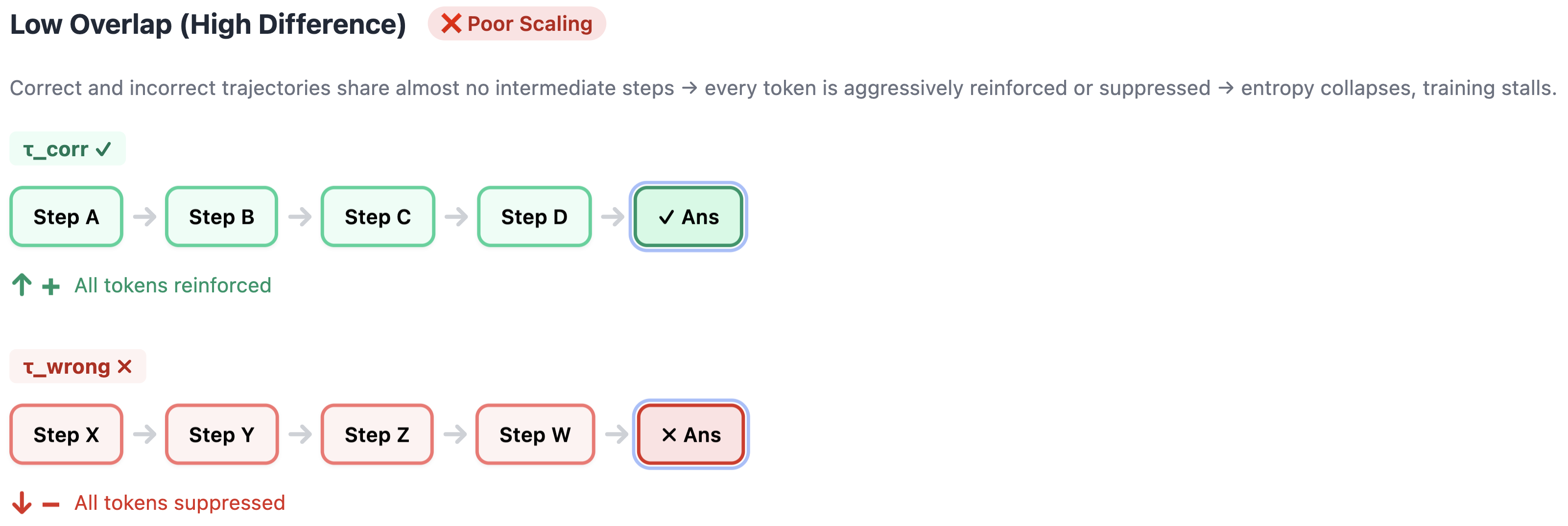

For each problem, we compare the correct trajectory \(\tau_{\mathrm{corr}}\) and the incorrect trajectory \(\tau_{\mathrm{wrong}}\), and partition the samples into two groups:

- Low-difference group: \(\tau_{\mathrm{wrong}}\) shares many intermediate reasoning steps with \(\tau_{\mathrm{corr}}\). The two paths diverge only at a few critical decision points.

- High-difference group: \(\tau_{\mathrm{wrong}}\) is largely unrelated to \(\tau_{\mathrm{corr}}\), with almost no shared intermediate steps.

Ideally, a curriculum trains on the low-difference group first and then gradually moves to the high-difference group.
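The partition can be sketched as below. The post does not state the overlap measure or the cut-off used, so the Jaccard overlap, the `threshold=0.5` default, and the function name are all assumptions made for illustration.

```python
def split_by_difference(pairs, threshold=0.5):
    """Split (problem_id, correct_steps, wrong_steps) triples into two groups.

    Overlap is measured with Jaccard similarity over extracted steps (assumption).
    Problems at or above the threshold go to the low-difference group.
    """
    low_diff, high_diff = [], []
    for problem_id, correct_steps, wrong_steps in pairs:
        c, w = set(correct_steps), set(wrong_steps)
        union = c | w
        overlap = len(c & w) / len(union) if union else 0.0
        if overlap >= threshold:
            low_diff.append(problem_id)
        else:
            high_diff.append(problem_id)
    return low_diff, high_diff


pairs = [
    ("p1", ["A", "B", "C", "D"], ["A", "B", "C", "E"]),  # overlap 3/5
    ("p2", ["A", "B", "C", "D"], ["X", "Y", "Z", "W"]),  # overlap 0
]
low, high = split_by_difference(pairs)
print(low, high)
```

A curriculum would then schedule the `low` problems first; the filtering variant simply drops the `high` ones.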

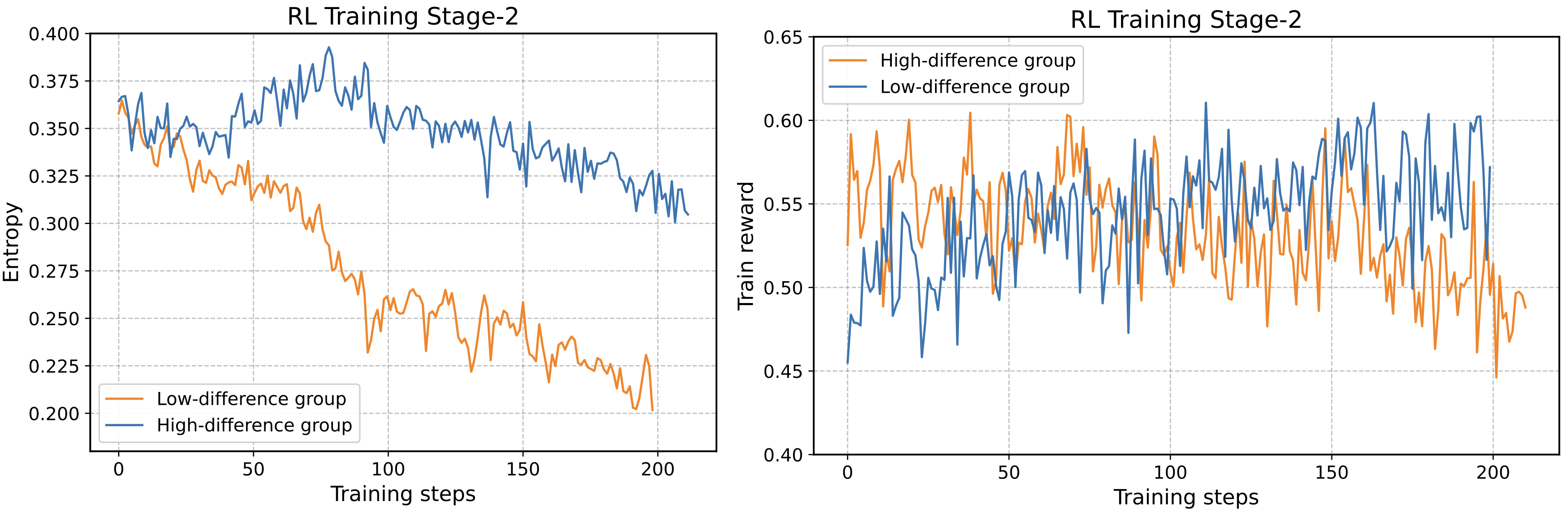

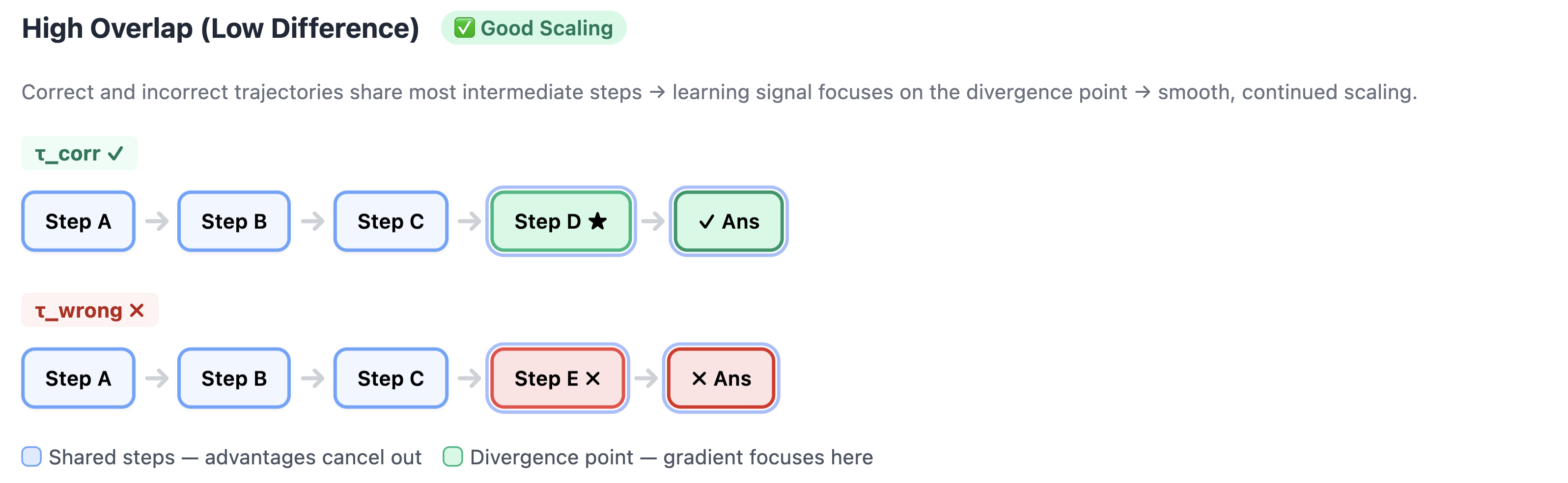

To compare the two groups, we take the model obtained after the first stage of RL training and continue training it separately on each subset.

The results are striking:

- Training on the low-difference group continues to scale smoothly for approximately 200 additional steps.

- Training on the high-difference group causes a rapid drop in entropy, while improvement in training reward quickly stalls.

The intuition may be rooted in how GRPO assigns credit.

Low overlap vs. high overlap

Low overlap (high difference). When

\[\tau_{\mathrm{corr}} = A \to B \to C \to D\]

and

\[\tau_{\mathrm{wrong}} = X \to Y \to Z \to W\]

share almost no subgoals, every token in the correct trajectory receives a positive advantage signal while every token in the incorrect trajectory is suppressed. This is a blunt, undifferentiated gradient: the model cannot identify which step actually caused the error. It is forced to wholesale reinforce or suppress entire trajectories, rapidly overfitting to narrow patterns. The result is entropy collapse and training stagnation.

High overlap (low difference). When the two trajectories share most of their reasoning path, for example both follow \(A \to B \to C\) before diverging into \(D\) (correct) vs. \(E\) (incorrect), the shared prefixes receive roughly equal positive and negative advantage signals that cancel out. The effective gradient concentrates on the divergence point: the critical step where the correct and incorrect paths split. This produces a precise, targeted policy update. Entropy decreases gradually, and training continues to scale.

Takeaway. Not all correct/incorrect trajectory pairs are equally useful for RL training. Pairs with high reasoning overlap provide a surgical learning signal: the model learns exactly which decision led to failure. Pairs with low overlap provide only a coarse binary signal that drives entropy collapse. Filtering for trajectory overlap is therefore a simple and effective form of curriculum design for reasoning RL.
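The cancellation argument can be made concrete with a toy accounting of group-normalized advantages. This is a deliberate simplification and not the training code: it treats each reasoning step as a single unit, assumes shared steps are generated identically in both trajectories, and sums the trajectory-level advantage that each step receives.

```python
from collections import defaultdict
from statistics import mean, pstdev


def net_step_signal(trajectories, rewards):
    """Sum, per step, the group-normalized advantage of every trajectory containing it."""
    mu, sigma = mean(rewards), pstdev(rewards)
    # Assumes the rewards in the group are not all equal, so sigma > 0.
    advantages = [(r - mu) / sigma for r in rewards]
    signal = defaultdict(float)
    for steps, adv in zip(trajectories, advantages):
        for step in steps:
            signal[step] += adv
    return dict(signal)


rewards = [1.0, 0.0]  # one correct and one incorrect trajectory
high_overlap = net_step_signal([list("ABCD"), list("ABCE")], rewards)
low_overlap = net_step_signal([list("ABCD"), list("XYZW")], rewards)
print(high_overlap)  # A, B, C net to zero; only D and E carry signal
print(low_overlap)   # every step carries a full-magnitude signal
```

In the high-overlap case only the divergence point is updated, while in the low-overlap case all eight steps are pushed at once, which matches the coarse signal described above.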

Although our original intention was to conduct curriculum learning, based on this observation, in subsequent training we directly remove the high-difference samples from the RL training set. After applying this filtering, we resume RL for approximately 200 additional steps, and validation performance improves further.

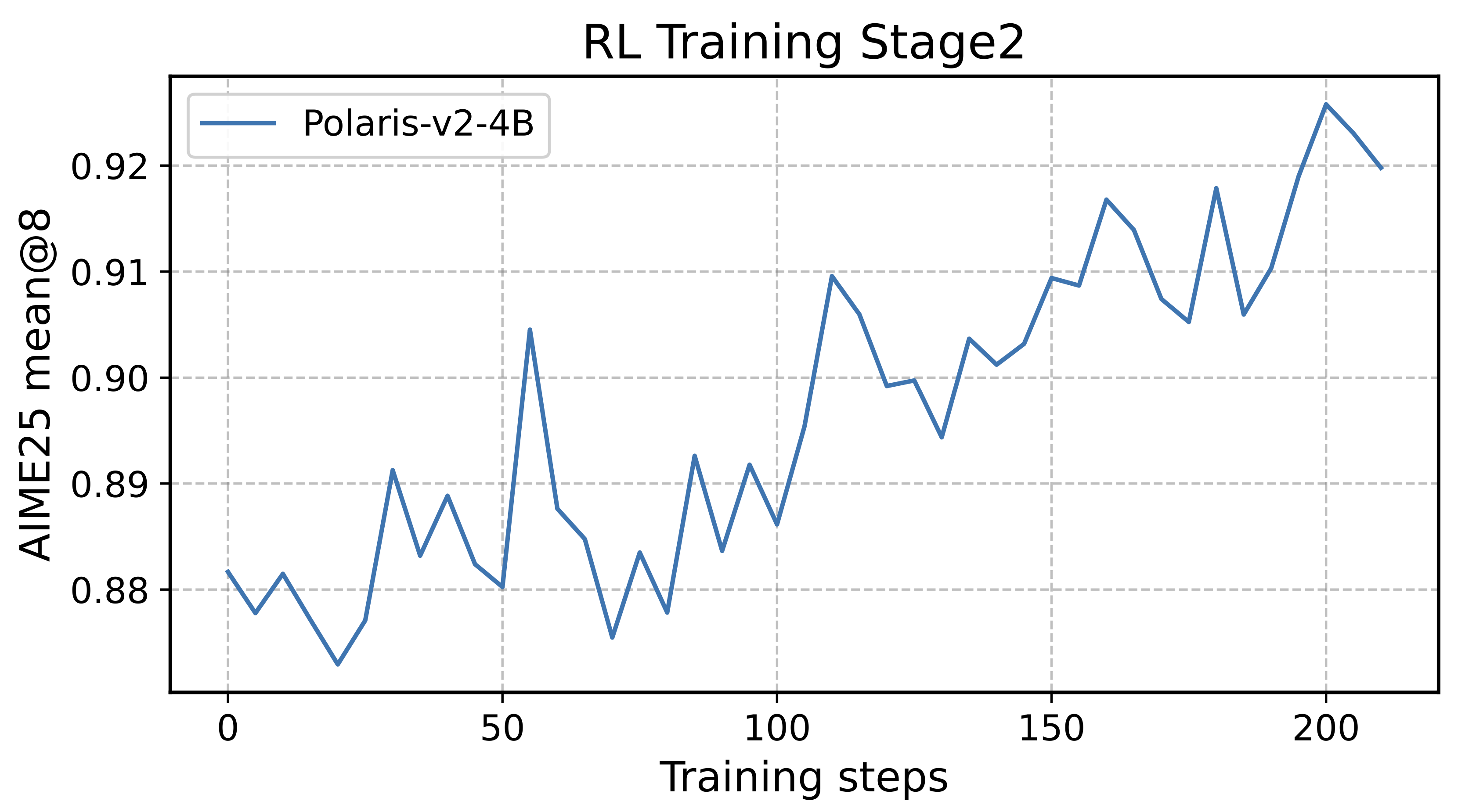

| Models | AIME25 | AIME26 | HMMT25 |
|---|---|---|---|
| Qwen3-4B-2507 | 80.9 | 78.9 | 55.6 |
| + SFT | 82.4 | 79.8 | 68.2 |
| + GRPO | 86.5 | 84.3 | 72.4 |
| + Process reward | 88.0 | 85.6 | 76.1 |
| + Data filtering | 91.8 | 89.5 | 80.0 |

Training Details

We use the VeRL codebase for RL training with a batch size of 64 and a rollout size of 8. Following the Polaris temperature scheduling strategy, we use a temperature of 1.2 in Stage 1 RL and 1.3 in Stage 2 RL. For inference, we recommend using a temperature between 1.2 and 1.35 with top-k = 20. We also use FP16 instead of BF16 during RL training to reduce the mismatch between the training and inference engines. We train the model with 32 H800 GPUs for 10 days.

2. 235B Model Training

In Polaris-V2, we not only train a 4B model, but also develop a 235B model under academic-level resources. Despite the difficulty of doing large-scale RL on large models, we achieve substantial improvements over Qwen3-235B-A22B-2507 on popular mathematical reasoning benchmarks.

Weak-to-Strong Training: RL on small, SFT on large

For small models, we can often push performance by designing more scalable RL algorithms. However, direct RL training becomes prohibitively expensive for very large models: most compute is spent on rollouts, and the cost further increases because hard math problems typically induce long chain-of-thought trajectories. With academic-level resources, end-to-end RL on a 235B model is close to infeasible.

Given this constraint, we adopt a weak-to-strong generalization approach. Instead of running RL on the 235B model, we first use RL to turn a smaller model into a strong specialist, and then transfer its behaviors to the larger model via supervised learning. Concretely, after careful optimization, our 4B model achieves large gains in mathematical reasoning and can be viewed as a math specialist. We then distill this 4B specialist, Polaris-V2-Qwen3-4B, into Qwen3-235B-A22B-2507 using 9K prompts from an instruction-following SFT dataset.

The distilled 235B model successfully inherits the reasoning patterns discovered by the 4B specialist, while also benefiting from the larger model capacity to achieve stronger overall performance than the small model.

Model merging to balance strengths

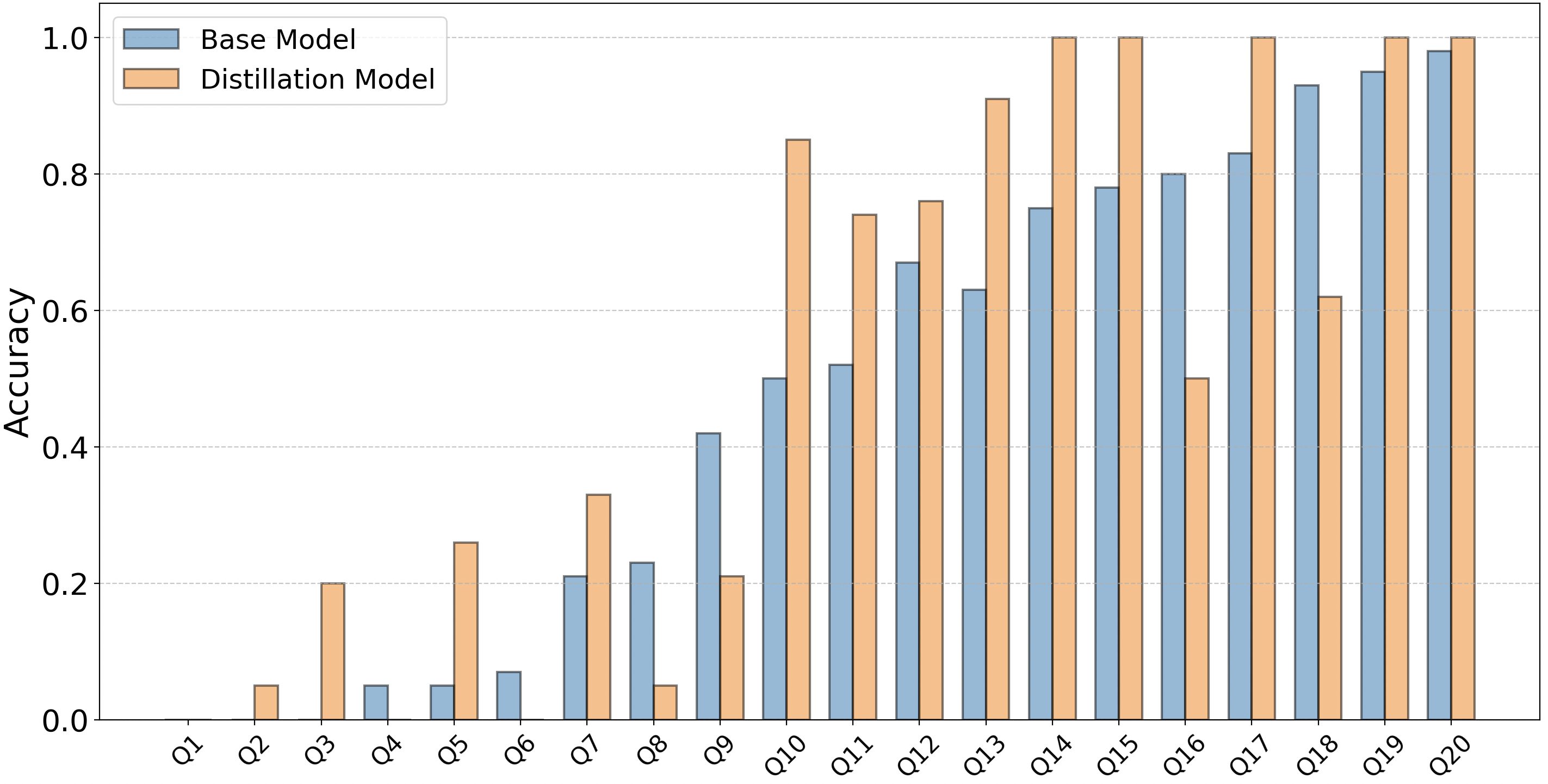

We further observe that the distilled model’s average score is not significantly higher than that of the base model.

| Model | AIME25 | AIME26 | HMMT25 |
|---|---|---|---|
| Base Model | 92.3 | 89.5 | 85.1 |
| Distillation Model | 90.0 | 91.4 | 88.2 |
| Merged Model | 94.2 | 93.2 | 90.5 |

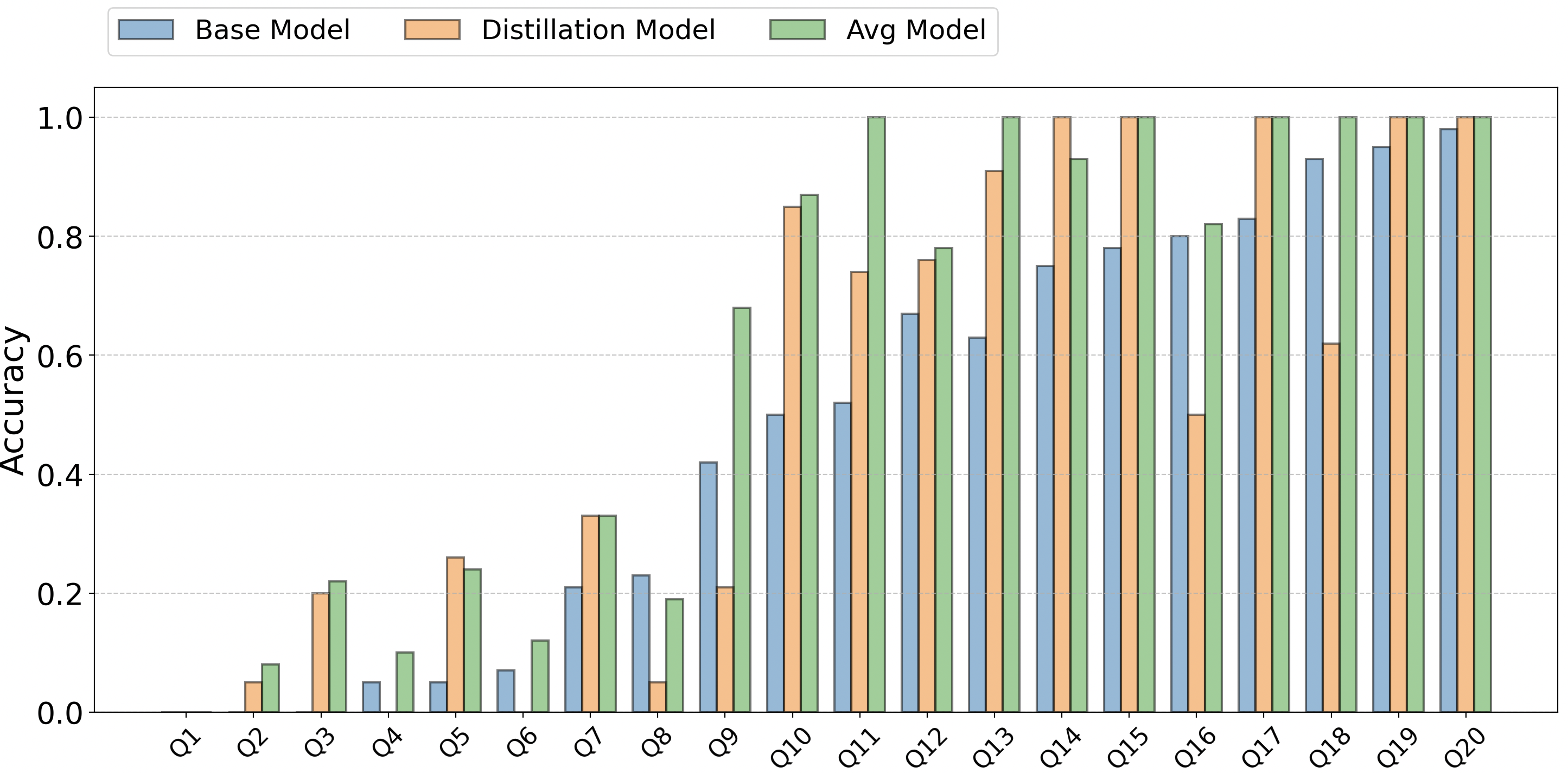

To better understand this phenomenon, we conduct a per-problem analysis of the accuracy differences between the distilled and base models. In Figure 5, we select the 20 problems on which the base model performs worst from each of the AIME25, AIME26, and HMMT25 benchmarks, and compare the accuracy distributions of the two models.

- On problems where the base model performs poorly, the distilled model can achieve higher accuracy.

- However, on problems where the base model is already strong, the distilled model may exhibit some degradation, offsetting its gains when averaged over the full benchmark.

In our experiments, the improvement is most pronounced on HMMT25, while the average gains on the other benchmarks are smaller.

These findings suggest that the two models possess complementary strengths. We therefore explore whether these strengths can be combined by merging the two models through weight averaging:

\[\theta_{\mathrm{merge}}=\alpha \theta_{\mathrm{base}} + (1-\alpha)\theta_{\mathrm{distill}}\]

We find an interesting phenomenon: the merged model tends to absorb useful behaviors from both sides, improving performance across datasets rather than trading one capability for another.

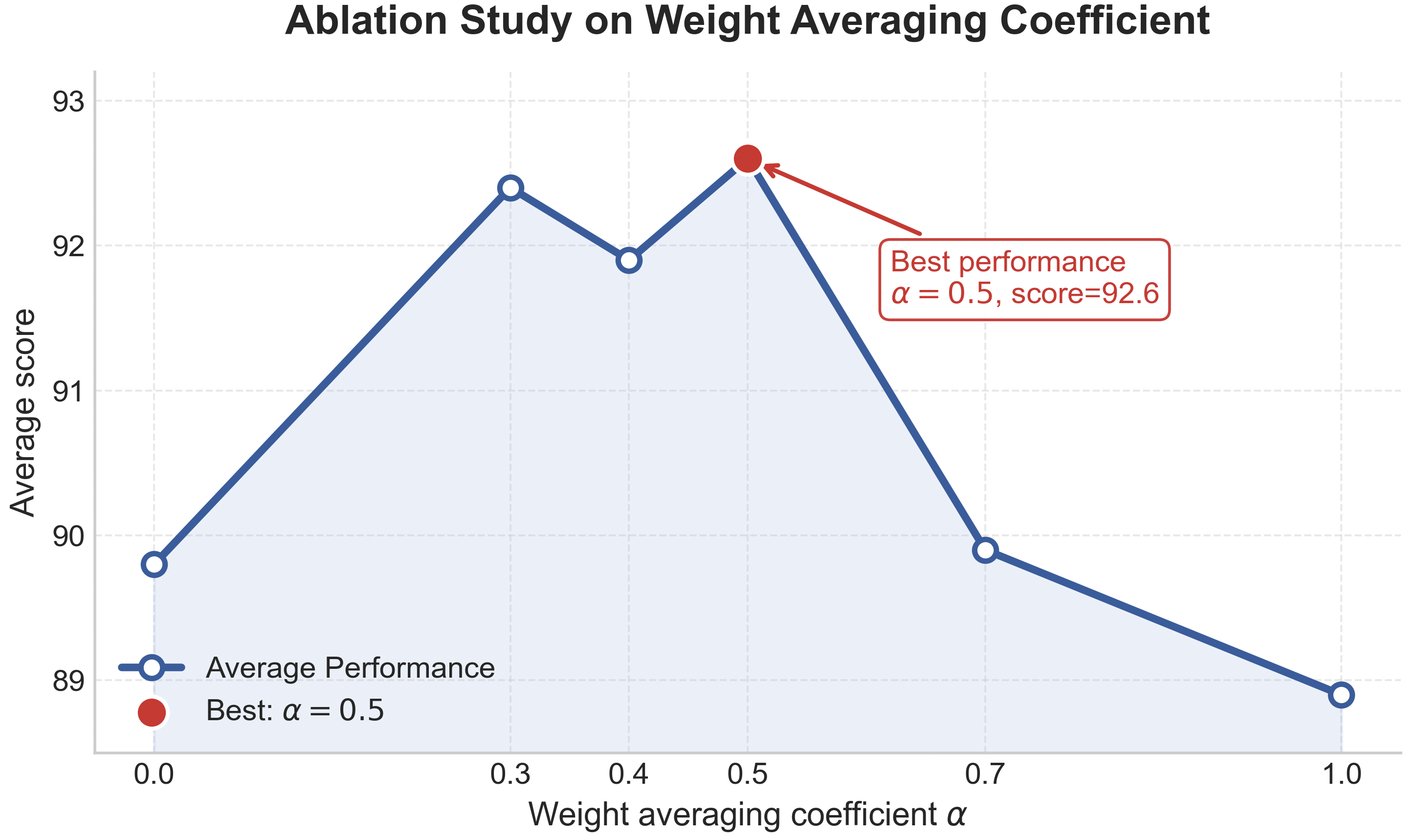

We conduct an ablation over the mixing coefficient \(\alpha\):

Results indicate that the best performance is obtained when \(\alpha \in [0.3, 0.5]\). We choose \(\alpha = 0.5\), i.e. an equal-weight merge, as the final released model.
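Weight averaging itself is a per-parameter linear interpolation. The sketch below illustrates the formula on plain Python lists standing in for tensors; the function name and the dictionary layout are assumptions, and a real merge would apply the same arithmetic to the tensors of two checkpoints with identical parameter names and shapes.

```python
def merge_checkpoints(base, distill, alpha=0.5):
    """theta_merge = alpha * theta_base + (1 - alpha) * theta_distill, per parameter."""
    if base.keys() != distill.keys():
        raise ValueError("checkpoints must contain the same parameter names")
    merged = {}
    for name, base_w in base.items():
        dist_w = distill[name]
        if len(base_w) != len(dist_w):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        merged[name] = [alpha * b + (1.0 - alpha) * d for b, d in zip(base_w, dist_w)]
    return merged


# Toy "checkpoints" with a single flattened parameter (hypothetical values).
theta_base = {"w": [1.0, 2.0]}
theta_distill = {"w": [3.0, 6.0]}
print(merge_checkpoints(theta_base, theta_distill, alpha=0.5))  # {'w': [2.0, 4.0]}
```

Sweeping `alpha` over a small grid and evaluating each merged model is one straightforward way to run the kind of ablation described above.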

Citation

@misc{Polaris2025,

title = {POLARIS: A Post-Training Recipe for Scaling Reinforcement Learning on Advanced Reasoning Models},

url = {https://hkunlp.github.io/blog/2025/Polaris},

author = {An, Chenxin and Xie, Zhihui and Li, Xiaonan and Li, Lei and Zhang, Jun and Gong, Shansan and Zhong, Ming and Xu, Jingjing and Qiu, Xipeng and Wang, Mingxuan and Kong, Lingpeng},

year = {2025}

}